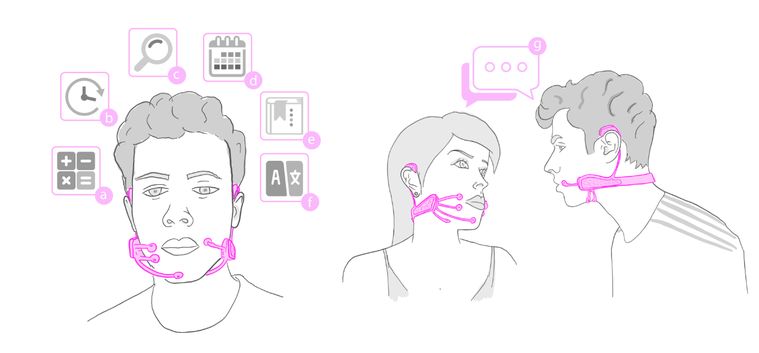

The Massachusetts Institute of Technology (MIT) Media Lab has developed a personalized, wearable silent speech interface named AlterEgo.

ABSTRACT (by MIT)

We present a wearable interface that allows a user to silently converse with a computing device without any voice or any discernible movements – thereby enabling the user to communicate with devices, AI assistants, applications or other people in a silent, concealed and seamless manner. A user’s intention to speak and internal speech is characterized by neuromuscular signals in internal speech articulators that are captured by the AlterEgo system to reconstruct this speech. We use this to facilitate a natural language user interface, where users can silently communicate in natural language and receive aural output (e.g – bone conduction headphones), thereby enabling a discreet, bi-directional interface with a computing device, and providing a seamless form of intelligence augmentation. The paper describes the architecture, design, implementation and operation of the entire system. We demonstrate robustness of the system through user studies and report 92% median word accuracy levels.

For more information, read the MIT paper "AlterEgo: A Personalized Wearable Silent Speech Interface".